Final Exam Review

Train and hope was described by T. F. Stokes and Donald Baer as:

“After a behavior change is effected through manipulation of some response consequences, any existent generalization across responses, settings, experimenters, and time, is concurrently and/or subsequently documented or noted, but not actively pursued.”

What does this mean?

Some analysts fall into the trap of simply “hoping” that generalization will occur without actively programming for it. As described in their original article, there are at least 7 suggested methods by which an analyst can actively promote generalization of a skill across time, people, and places.

Sequential Modification - when transferring stimulus control from one setting to another, allow for small successive approximations from the initial controlling stimulus to progressively different variations of the stimulus until, eventually, the dimensions of the SD have been altered sufficiently and the terminal stimulus has effective control of the target behavior.

Introduce to Natural Maintaining Contingencies - plan to fade any artificially dense schedule of reinforcement towards the active schedule in the client’s natural environment.

Train Sufficient Exemplars - introduce the learner to sufficient variants of the target stimuli such that reinforcement has been contacted for engaging in the response when a wide variety of non-critical elements of the SD / relevant dimensions for stimulus transfer have been varied or removed - e.g., tacting “bird” when shown a variety of birds of different colors, shapes, sizes (all non-critical elements of the class of animal) whereas some features will likely be consistent across all exemplars (has wings, a beak, feathers, etc.).

Train Loosely - little or no effort is made to control (in an experimental / highly consistent manner) the presentation of the stimuli and the range of acceptable answers is broadened.

Use Indiscriminable Contingencies - during teaching, use or fade towards intermittent schedules of reinforcement to mirror the likely schedule(s) that will be encountered naturalistically. Additionally, if necessary, make it hard to determine when the behavior will contact reinforcement and when it will not, to promote responding even in contexts where reinforcement is not likely to occur.

Program Common Stimuli - plan to use stimuli that are relevant to the learner and will likely be encountered in the day-to-day natural environment. For example, if you are teaching the child tacts, teach them first things they see on their daily routine - their lunchbox, a doorknob, a car, etc.

Mediating Generalization - establishing a sufficiently broad response when learning a new skill that it is likely to be employed in other types of skills - e.g., teaching a child to cut along a dashed line to make animal cut-outs allows the learner to cut out different shapes of animals, which may include untrained exemplars.

Train to Generalize - consider generalization itself an operant that can be reinforced. When the learner engages in novel generalized behavior, provide reinforcement for that behavior (or differential reinforcement for approximations of generalized responding).

-|-

ESSAY RESOURCES

Rodrigo is teaching a client to wash her hands. Rodrigo completes all the steps to washing hands for his client using hand-over-hand prompting, but on the final step, he uses prompting and reinforcement until his client is independently completing the final step. When that step is mastered, he begins teaching the second to last and continues to take data on the last step as "maintenance." He continues in this manner, moving backwards in the chain, until the client is independently completing the entire task.

What type of teaching procedure is this?

-|-

What things are important to do before intervention?

- Reinforcer assessment: Survey, ask the child, observe the child in a free play environment

- Afterwards, identify powerful reinforcers

- Quickly and immediately and consistently reinforce

- Dense schedule of reinforcement when teaching a skill

Five Stages of Learning

- Acquisition: This is the first stage in learning. The patient is starting to be able to complete the target skill correctly, but is not yet accurate or fluent in the skill. The goal in therapy at this stage is to improve accuracy. Lots of help is required and lots of motivational support is required for the child to begin to develop the skill. In acquisition, we typically work on the topography and accuracy of the behavior, although other behavioral dimensions can also be targeted.

- Fluency: This is the second stage in learning. The patient is now able to complete the target skill accurately but they work slowly and thoughtfully in order to do so. The goal of this phase in therapy is to increase the patient’s speed of responding so they can demonstrate the skill independently with high accuracy and increased speed. When the patient is in the stage of developing fluency, we typically target speed and accuracy.

- Generalization: Generalization is the third stage of learning. It is the process of demonstrating a skill across different people, places, and materials. It is not enough for a client to just demonstrate acquisition and fluency in a skill if they only ever use that skill in your sessions with them, or at your clinic and not across other environments.

- Maintenance: We want the child to learn the behavior well, so they don’t forget how to do it between sessions or at the end of our time working with the child. We want the behavior to be maintained across the lifespan. If we are targeting a behavior that is not needed across the lifespan, then maybe we should re-evaluate that! We want children to learn behavior that will have long-term impact in improving their lives. When the child is entering maintenance, we typically don’t have as many sessions that target that skill, or we start relying on other people to keep the skill in practice so that it becomes a skill that will not fade away. Maintenance is the last stage of learning that we target in therapy.

- Adaptation: Adaptation is the final stage of learning, in which the child spontaneously adapts the behavior to a stimulus or environment through determining aspects of the environment or behavior that need to be changed in order to successfully achieve an outcome.

USE THE BIKE EXAMPLES

ACQUISITION BIKE EXAMPLE

- When we want a child to learn to ride a bike, we first make it as easy as possible. We put training wheels on the bike and give lots of practice with the training wheels independently. Which stage of learning is this?: This is acquisition. In acquisition, we typically work on the topography and accuracy of the behavior, although other behavioral dimensions can also be targeted. Acquisition is the first stage of learning. In this stage, the behavior is not well formed, or doesn’t exist at all at the beginning. Lots of help is required and lots of motivational support is required for the child to begin to develop the skill. We are willing to accept some exemplars of behavior that are not quite what we want to see as the end product.

FLUENCY BIKE EXAMPLE

- Our student no longer needs training wheels and dad’s help is not needed. The student doesn’t fall off the bike and doesn’t need our help anymore. They can still continue to refine their speed and accuracy, but the behavior is approaching the goal for the topography. What stage of learning is the child in?: The child is in the stage of developing fluency, where we typically target speed and accuracy. We want children to achieve fluency with the skills they acquire--this means they can demonstrate the skill independently with high accuracy and increased speed.

GENERALIZATION BIKE EXAMPLE

- We want the student to be able to ride a bike at grandma’s or on vacation, even if it is a different color or style. And we want the child to demonstrate the skill with other people in their life, not just their dad or therapist. What stage of learning is this?: This is generalization, which is the process of demonstrating a skill across different people, places and materials.

ADAPTATION BIKE EXAMPLE

- Our student moves from riding a bike to riding a unicycle. They also move from a one-gear bike to a 10-gear bike with handbrakes, or an elliptical bike. What stage of learning is this?: This is adaptation, the final stage of learning. The student spontaneously adapts the behavior to a stimulus or environment through determining aspects of the environment or behavior that need to be changed in order to successfully achieve an outcome.

Schedules of Reinforcement

What are schedules of reinforcement related to?: Schedules of reinforcement are related to the stage of learning and the “closeness” to the criteria you have set. As you start to approach the criteria, you fade back on reinforcement frequency, and build in natural reinforcers (or those available in the natural environment).

The earlier in learning, the more the reinforcement. Early in the treatment, we reinforce every correct response. This is called continuous reinforcement.

After we feel the behavior has been demonstrated more times correctly (or at criteria) than incorrectly, we switch to intermittent reinforcement.

What effects on responding does continuous reinforcement have?: Continuous reinforcement produces a steady, high rate of performance as long as reinforcement follows every response. A high frequency of reinforcement may lead to early satiation. Behavior weakens rapidly when reinforcement is withheld. This is appropriate for newly emitted, unstable, low-frequency responses.

What effects on responding does intermittent reinforcement have?: In intermittent reinforcement, the lower frequency of reinforcement makes early satiation less likely. It is capable of producing high frequencies of responding. It is also appropriate for stable or high-frequency responses.

When we fade reinforcers, we do it gradually

Why do we want to reduce reinforcer enjoyment?: Because reinforcer enjoyment takes away from teaching time, we want to reduce that over time.

Why do we “thin” or “fade” reinforcement?: We thin or fade reinforcement because we want the child to do the behavior without artificial motivation. We want the child to demonstrate the behavior in the correct context when we are not there to reinforce it, and for natural reinforcers to take over for that behavior. We also want to maximize our instructional episodes, or trials, so that we can get as much practice as possible on many different skills as possible. Because reinforcer enjoyment takes away from teaching time, we want to reduce that over time.

Build fluency

Fade reinforcer to natural level (what the child will experience)

Then it naturally reinforces

Variable and Fixed

Interval and ratio

What schedule of reinforcement produces the most stable, high rates of behavior?: Variable ratio
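
To make the ratio schedules above concrete, here is a minimal Python sketch that decides, response by response, whether a reinforcer is delivered. Continuous reinforcement is treated as a fixed ratio of 1. The function names, and the choice to draw each variable-ratio requirement uniformly between 1 and 2 × mean − 1, are assumptions made for this illustration and do not come from the course material.

```python
import random


def fixed_ratio(n_responses, ratio):
    """Return one boolean per response: True where FR(ratio) delivers a reinforcer.

    ratio=1 is continuous reinforcement (every response is reinforced).
    """
    return [(i % ratio) == 0 for i in range(1, n_responses + 1)]


def variable_ratio(n_responses, mean_ratio, seed=0):
    """Return one boolean per response under a variable-ratio schedule.

    Assumption for this sketch: each response requirement is drawn uniformly
    from 1 .. 2*mean_ratio - 1, so requirements average out to mean_ratio.
    """
    rng = random.Random(seed)
    delivered = []
    remaining = rng.randint(1, 2 * mean_ratio - 1)
    for _ in range(n_responses):
        remaining -= 1
        if remaining == 0:
            delivered.append(True)
            # Draw a new, unpredictable requirement for the next reinforcer.
            remaining = rng.randint(1, 2 * mean_ratio - 1)
        else:
            delivered.append(False)
    return delivered


continuous = fixed_ratio(10, 1)   # early learning: reinforce every response
fr3 = fixed_ratio(10, 3)          # every third response is reinforced
vr3 = variable_ratio(300, 3)      # on average every third response
```

Under the fixed schedule the learner can predict exactly which response pays off, while under the variable schedule the next reinforced response is unpredictable.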

Teaching a new skill: Shaping

What do you do when you’re shaping a behavior?: Differential reinforcement

-|-

- If you have skills but the child doesn’t put it together in a complex behavior, you use chaining. The child does it independently without prompts

- Forward chaining, backwards chaining, total task prompts

- How do we deal with reinforcer potency?

- Competing contingencies

- Establishing operations makes it easier

- Abolishing operations makes it harder

- How do we get the kid to do the behavior when shaping?: prompt hierarchies, most to least if clueless

- Advantages and disadvantages

- Prompting

- Fading

- Fade prompts fast to get to natural discriminative stimulus

- What is a contingency?: If then statement

- Discriminate between when to do or not to do the behavior

- Generalize behavior between people, places and things

- Make sure behavior is maintained

Problems

- Antecedent control: Setting up situation to prevent behavior from happening next time

- 4 Cs of prevention: Catch them being good, clarity (KISS), choices, and communication

- Surface management techniques

- Social stories REVIEW THOROUGHLY LATER LMAO

- Setting up the environment to prevent behavior from being needed

- Set up opportunities for child to get the function they need without resorting to problem behavior

- DRO, DRI, DRA, DRL

- DRO: Absence

- DRI: Incompatible behavior

- DRA: Alternative behavior

- DRL: Low rates

- 4 basic functions: Escape, attention, make a demand, self stimulation (automatic reinforcement)

9

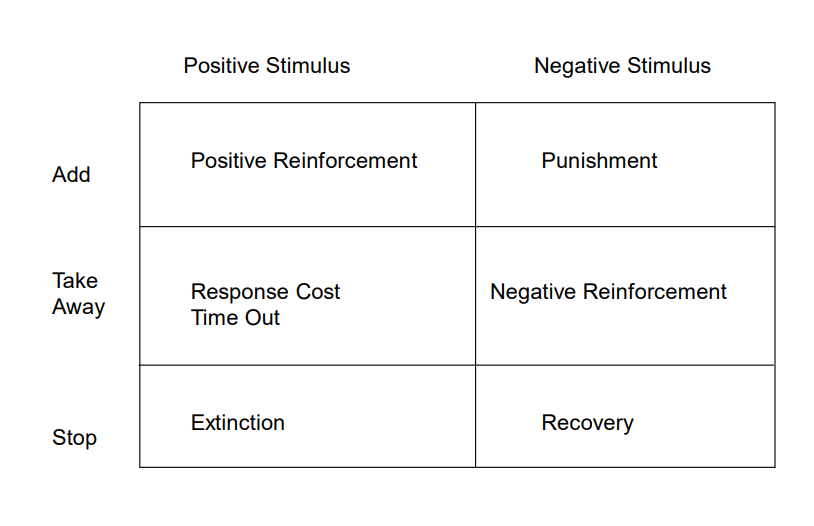

Behavioral reduction strategies: punishment and reinforcement

Hierarchy of intrusiveness

Aversive procedures

Stay high up on hierarchy to preserve relationship with child

Take data when using punishment for ethical purposes; if it doesn’t cause behavior to go down, it is not ethical

Fair pair rule: teach alternative behavior for every behavior we want to get down to zero

Discrete Trial Training history

Discrete Trial Training Pros and Cons

Compliance training

Stimulus equivalence

Tangible reinforcers problems: satiation and access outside the training environment and cost

Establishing operations

5 Cs of responding: Look at your CONSEQUENCES (Are you actually reinforcing/discouraging the behavior you are addressing? What is the function of the behavior? Natural? Related to the behavior? Proportionate to the offense?). Make the consequences IMMEDIATE and CONTINGENT upon the occurrence of the behavior you are addressing. Be CONSISTENT (across episodes, people and settings) with consequences. CATCH the child being good. BE CALM (neutral tone, adult time away).

Stages of PECS

- Phase 1: Physical Exchange. Goal is to increase spontaneity. No verbal prompts. Variety of trainers. In addition to structured training trials, create at least 30 opportunities for spontaneous requesting during functional activities. Backward chaining. Move to Phase 2 when student is successful on 80% of trials.

- Phase 2: Distance and Persistence. Goal is to increase spontaneity. Vary trainers. Introduce spontaneous opportunities to request during functional activities. Remove the picture from board. Allow free access to reinforcer for 10-15 seconds. The student must now pull the picture off the strip and hand it to the partner. Use physical assistance minimally. Partner begins to move backward from the child when he begins the pass. Begin to increase the distance from the child to the book. Vary only one thing at a time initially.

- Phase 3: Discrimination. Set up situation where communication would be likely. Pictures of preferred vs non-preferred item; contextually appropriate vs irrelevant object. When child selects appropriate picture, reinforce with praise and object… When child selects irrelevant/non-preferred picture, give child the object with no verbal/social reaction. Error correction techniques. Correspondence checks. The purpose is discrimination training. Use context in which communication would be likely. Introduce second picture to display. The picture should be of a non-preferred item. Deliver the item the child passes to the partner, regardless of whether it is really what he wants, and vary placement.

- Phase 4: Sentence structure expansion. Sentence structure. Start with “I want” picture on strip and child adds object/activity, then passes strip. Move on when child independently makes sentence and hands to partner on 80% of opportunities

- Phase 5: Answering Questions/ Responding to “what do you want?” Partner simultaneously points to “I want” and asks “what do you want?” Introduce delay into prompt, 1 sec per success. The goal is for the child to consistently beat the verbal prompt and then fade the point.

- Phase 6: Commenting. The beginning of social speech. Use “I see” picture

- PECS Advantages: Everyone understands, reinforcers are contextual, can work on complexity with non-verbal children. Quick access to R+ for communication. Simple, inexpensive.

- Disadvantages of PECS: Can become unwieldy, may delay speech, cost, mechanism for transfer to vocal speech, only addresses communication and prep time
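
Two of the PECS rules above are simple enough to sketch in Python: the Phase 5 prompt delay that grows by 1 second per success, and the 80% criterion used to move on from a phase. This is only an illustration. The function names are hypothetical, and holding the delay unchanged after an unsuccessful trial is an assumption, since the notes do not say what happens on an error.

```python
def next_prompt_delay(current_delay_s, success, step_s=1.0):
    """Lengthen the delay before the prompt by step_s after each success.

    Assumption: after an unsuccessful trial the delay stays where it is.
    """
    return current_delay_s + step_s if success else current_delay_s


def meets_criterion(trial_results, criterion=0.80):
    """True when the share of successful trials reaches the criterion."""
    if not trial_results:
        return False
    return sum(trial_results) / len(trial_results) >= criterion


# Walk the delay through four trials: success, success, error, success.
delay = 0.0
for outcome in [True, True, False, True]:
    delay = next_prompt_delay(delay, outcome)

ready_to_move_on = meets_criterion([True] * 8 + [False] * 2)   # 8 of 10 trials
not_ready_yet = meets_criterion([True] * 7 + [False] * 3)      # 7 of 10 trials
```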

Compare and contrast DTT and IT

Compare and contrast least to most and most to least prompting

Classes of reinforcers and pros and cons to each type of reinforcer.

Primary reinforcers: Stimuli that are reinforcing without any prior learning (e.g., food, water, warmth). They are unconditioned reinforcers, not neutral stimuli.

Secondary reinforcers: Must be learned, or conditioned, by being paired with other reinforcers (e.g., tokens, praise).

DRAW THIS OUT YOU DIPSHIT

-|-

We started out by defining behavior and what it is not. How do we describe it? We operationalize it so it’s measurable and observable.

Behavior has dimensions: 7 behavioral dimensions

Dimensions DESCRIBE BEHAVIOR: Rate, frequency, latency, accuracy, topography, duration, intensity

FUNCTIONS DESCRIBE OUTCOMES

Take data on the behavior you’re going to change

Write up an objective that has 3 important components: Context, topography and criteria

Partial Prompt

Professor Daly says “Two important components of a behavioral objective start with C.” What is this an example of?: When Professor Daly says that “Two important components of a behavioral objective start with C.”, this is an example of partial prompting. She is prompting us by giving us a hint.

Your objective should say what the child should do rather than what the child should not do. Why?: Your objective should say what the child should do rather than what the child shouldn’t do because it has to pass the Dead Man’s Test. Every objective should target behavior and the behavior can be determined by whether or not a dead man is capable of doing it. If a dead man can do it, it is not a behavior.

What do you need to get before you start an intervention?: Before you start an intervention, you need a baseline. You need to know what the behavior is like and you can’t rely solely on the parent or the teacher.

What do we do to set criteria?: When we set criteria, we need to assess what’s acceptable to everybody and culturally appropriate. Criteria should be aimed at bringing the child into the average range and considering what’s above chance. Most importantly, the criteria have to be safe and functional.

What do we need to do before an intervention?: Before we perform an intervention, we need to conduct a reinforcer assessment. This could be a forced choice, survey or observation of the child.

What are examples of reinforcer assessments?: Forced choice, survey, observe the child in a free play environment

Then identify your powerful reinforcers

Afterwards you set up situations that make the behavior more likely to happen

QUICKLY and IMMEDIATELY and CONSISTENTLY reinforce

Use a dense schedule of reinforcement if you are just teaching a brand new skill

What are the stages of learning?: Acquisition, fluency, generalization, maintenance and then adaptation

What do we know about the schedules of reinforcement?: The earlier in learning, the more reinforcement. The later in learning, the less the reinforcement

The earlier in learning…: The earlier in learning, the more the reinforcement. The later in learning, the less the reinforcement.

What do we need to know before reinforcement?: Before attempting reinforcement, we need to conduct a reinforcer assessment.

What should we do when we fade reinforcers?: When we fade reinforcers, we do so gradually. Then we fade the reinforcers to the natural level. Hopefully the behavior itself becomes automatically reinforced.

What do we fade reinforcers to?: We fade reinforcers to the natural level. Hopefully the behavior itself becomes automatically reinforced.

What schedule of reinforcement results in the most stable, high rates of behavior?: The variable ratio schedule of reinforcement results in the most stable, high rates of behavior.

What should you do when you have to teach a child a new skill that they don’t have at all?: When you have to teach a child a new skill that they don’t have at all, you use shaping

What do you do when you’re shaping a behavior?: You use differential reinforcement when you’re shaping a behavior

What is differential reinforcement?: Differential reinforcement is when you start to reinforce a behavior that is better and then stop reinforcing the behavior that you were reinforcing prior to that. In other words, a new level is reinforced and the old level is put on extinction.

Shaping: When you teach a child a new skill, you use shaping. You utilize something that the child already has in their tool kit and you gradually reform it to the targeted skill. In the end, you don’t want any additional prompting or any additional steps in the behavior topography that you put into your objectives.

If you have a bunch of skills but the child isn’t putting them together into a complex behavior, what should you do?: If you have a bunch of skills but the child isn’t putting them together into a complex behavior, you should use chaining.

Chaining: At the end of chaining, the child should be able to do it independently without prompts.

Types of chaining: Forward chaining, backwards chaining, total task prompt

What do you have to consider when you’re deciding what type of chaining to use?: When you’re deciding what type of chaining to use, you have to ask yourself various questions. How frustrated is the child? How difficult is the task? How potent is the reinforcer?

How do we deal with reinforcer potency?

We know things can interfere with our reinforcer, we know that competing contingencies might be in place and that can interfere with our treatment program

What could possibly interfere with our treatment program?: We know that things can interfere with our reinforcer and we know that competing contingencies might be in place. This could interfere with our treatment program.

What is a behavior contingency?: In a contingency statement, the consequence of the possible act can also be some behavior. These tend to be If Then statements. For example, if Joe plays his drums at night, the neighbors might complain. Another example would be if you feed the dog at the table during our meals, he will often come begging during our meals.

If Joe plays his drums at night, the neighbors might complain. What is this an example of?: If Joe plays his drums at night, the neighbors might complain. Since this is an “If → Then” statement that shows the consequence of the possible act, this would be a contingency.

If you feed the dog at the table during our meals, he will often come begging during our meals. What is this an example of?: If you feed the dog at the table during our meals, he will often come begging during our meals. This is an example of a contingency. It is an “If → Then” statement that illustrates the consequence of the possible act.

What are competing contingencies?: Competing contingencies are other contingencies in the environment that work against the one you have planned, so the person can get the same outcome by doing something else (or nothing at all). For example, if someone hands the individual a bottle of water before they walk to the refrigerator, there is no need for the individual to complete the task on their own. This noncontingent delivery of water competes with the contingency you planned to evoke the behaviors needed to get the bottle of water.

If someone hands the individual a bottle of water before he walks to the refrigerator, there is no need for the individual to complete the task on their own. What is this an example of?: If someone hands the individual a bottle of water before they walk to the refrigerator, there is no need for the individual to complete the task on their own. This is an example of competing contingencies. The noncontingent delivery of water competes with the contingency you planned to evoke the behaviors needed to get the bottle of water.

Premack Principle: The Premack principle states that more probable behaviors will reinforce less probable behaviors. For example, a child may be incentivized to do low probability behavior such as homework if they are told they will do a high probability activity like play with their toys afterwards. This is also known as Grandma’s Rule.

What is Grandma’s Rule?: Grandma's Rule is another term used to refer to the Premack Principle. The Premack Principle states that more probable behaviors will reinforce less probable behaviors. For example, a child may be incentivized to do low probability behavior such as homework if they are told they will do a high probability activity like playing with their toys afterwards.

A child may be incentivized to do low probability behavior such as homework if they are told they will do a high-probability activity like playing with their toys afterwards. What does this demonstrate?: A child may be incentivized to do low probability behavior such as homework if they are told they will do a high probability activity like playing with their toys afterwards. This demonstrates the Premack Principle (think of Grandma’s Rule: “If you eat veggies, you can eat my homemade cookies.”)

Establishing operations can make it easier for us

If there are abolishing operations, it could make it more difficult for us to get the child to demonstrate the skill with the materials that we are using

We need to make sure that our reinforcers are free from the abolishing operations

How do we get the child to do the behavior when we’re doing shaping?: Prompt hierarchies, physical interventions, most to least (when they can’t do it at all or have no clue how to do it), least to most (see if they can do it themselves before offering guidance)

COMPARE MOST TO LEAST AND LEAST TO MOST

FADE YOUR PROMPTS FAST TO GET TO NATURAL DISCRIMINATIVE STIMULUS

SD is a stimulus that evokes a certain behavior to either get or avoid a certain consequence

A red light or a red stoplight would be a ___ device for us to stop our cars: A red light or a red stoplight would be a stimulus control device for us to stop our cars

If there are situations in which the contingencies are

If you see a stimulus and there’s no normal response (or there isn’t the normal response) to behavior in that situation, it might be a stimulus change situation

LOOK INTO THE PROCEDURES NEEDED FOR THIS

- we discriminate when we do a behavior or when we don’t do a behavior

- Generalize behavior across people places and things

PROBLEMS MODULE 8

- Antecedent control: Setting up the situation to prevent the behavior from happening next time

- Timers, 4 Cs of prevention, Surface management techniques, social stories, setting up the environment to prevent the behavior from being needed

- Set up opportunities for the child to set up a way to get their needs met without resorting to problem behavior

- DRO, DRI, DRA, DRL Reinforcement Strategies

- Functions of Behavior: ESCAPE ATTENTION MAKE A DEMAND SELF STIMULATION

- How do we determine functions of behavior

- How do we respond to functions of behavior

- Replace the problem behavior with an alternative way of meeting the respective function

MODULE 9 BEHAVIOR REDUCTION

Hierarchy

Overcorrection

Water mist

Contingent Exercise

Ammonia inhalants

Electric shocks

TAKE DATA ALL THE TIME FOR PUNISHMENT OTHERWISE IT’S UNETHICAL IF BEHAVIOR IS NOT GOING DOWN

DISCRETE TRIAL PROS AND CONS

HISTORY OF DISCRETE TRIALS

HOW YOU SET UP DISCRETE TRIALS

Stimulus Equivalence: A way of teaching concepts so you don’t have to explain every single word

“Teaching the relationship between lowercase and capital Cat.” → Stimulus Equivalence

PECS incorporated in incidental teaching, chaining, discrimination

Give me two examples of functions of behavior

What is the difference between incidental teaching and discrete trial?

Incidental teaching is child led, both start with an easy response, both use something that’s easy for the child, they also ____

Why is it important to target generalization in therapy?: So they can apply it across multiple environments. The child should be able to do it in the natural environment and not just in therapy.

What are things to consider when using tangible reinforcers?: Satiation and access outside of the training environment. The cost. Replacement items. Establishing operations. Can they get it in other places?

Dimensions of behavior are FRAT DIL

frequency, rate, accuracy, topography, duration, intensity, latency
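
Several of these dimensions can be computed directly from session data. The Python sketch below works out frequency, rate, mean duration, and mean latency from a list of timed episodes. The `Episode` record, its field names, and the sample session are all hypothetical and exist only for this illustration. Accuracy, topography, and intensity are left out because they need scoring rules rather than timestamps.

```python
from dataclasses import dataclass


@dataclass
class Episode:
    sd_time: float  # seconds into the session when the instruction (SD) was given
    start: float    # seconds into the session when the behavior began
    end: float      # seconds into the session when the behavior ended


def summarize(episodes, session_minutes):
    """Compute frequency, rate, mean duration, and mean latency for a session."""
    frequency = len(episodes)                             # count of occurrences
    rate = frequency / session_minutes                    # occurrences per minute
    durations = [e.end - e.start for e in episodes]       # how long each lasted
    latencies = [e.start - e.sd_time for e in episodes]   # time from SD to onset
    return {
        "frequency": frequency,
        "rate_per_min": rate,
        "mean_duration_s": sum(durations) / frequency if frequency else 0.0,
        "mean_latency_s": sum(latencies) / frequency if frequency else 0.0,
    }


# Made-up five-minute session with three episodes of the target behavior.
session = [Episode(0, 2, 10), Episode(60, 64, 70), Episode(120, 123, 133)]
summary = summarize(session, session_minutes=5)
```

Frequency and rate differ only in that rate divides the count by observation time, which is what makes sessions of different lengths comparable.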