Probability

Uncertainty:

observed variables (evidence)

unobserved variables

model

agent knows something about how the known variables relate to the unknown variables

Random variables:

some aspect of the world about which we (may) have uncertainty

R = is it raining?

T = is it hot or cold?

D = how long does it take to drive to work?

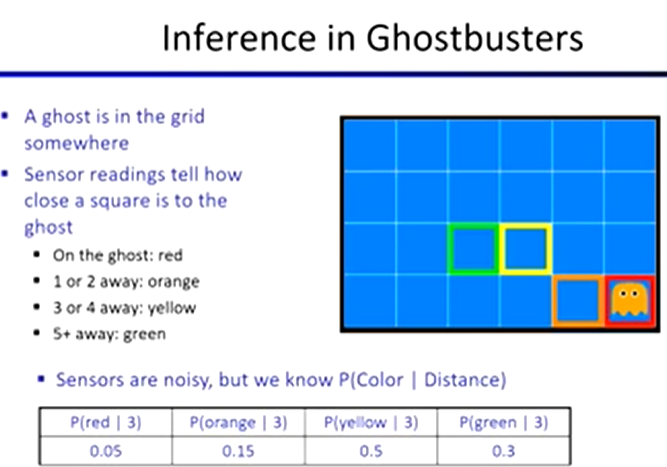

L = where is the ghost?

Random variables are denoted with capital letters

Have domains

R = {true, false}

T = {hot, cold}

D = [0, ∞)

L = {(0,0), (2, 3)}

Probability Distributions

associate a probability with each value

unobserved random variables have distributions

ex. P(T), where P(hot) = 0.5:

| T | P |
|---|---|
| hot | 0.5 |
| cold | 0.5 |

Joint Distributions

over a set of random variables: X1, X2, … Xn

specifies a real number for each assignment (or outcome)

P(X1 = x1, X2 = x2, …, Xn = xn) or, in shorthand, P(x1, x2, …, xn)

Size of distribution if n variables with domain size d?

d^n
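The count d to the power n (d^n) can be checked by enumerating every full assignment. The sketch below is illustrative only: the variable W and all three domains are assumptions chosen so that each domain has size d = 2.

```python
from itertools import product

# Hypothetical domains (illustrative); each has size d = 2.
domains = {
    "R": [True, False],
    "T": ["hot", "cold"],
    "W": ["sun", "rain"],
}

# A joint distribution needs one entry per full assignment,
# i.e. one per element of the Cartesian product of the domains.
assignments = list(product(*domains.values()))

n = len(domains)      # number of variables
d = 2                 # size of each domain
num_rows = len(assignments)
print(num_rows, d ** n)
```

The table grows exponentially in n, which is why writing out a full joint distribution is only practical for a handful of variables.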

Probabilistic Models

a joint distribution over a set of random variables

(Random) variables with domains

assignments are called outcomes

say how likely each assignment (outcome) is

normalized: sum to 1

Events:

a set E of outcomes

P(E) = ∑_{(x1, …, xn) ∈ E} P(x1, x2, …, xn)

from a joint distribution, we can calculate the probability of any event
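As a minimal sketch of computing an event's probability, the code below sums the joint entries of the outcomes that belong to the event. The joint table over T and W and its numbers are made up for illustration, and `event_probability` is a hypothetical helper name.

```python
# Illustrative joint distribution P(T, W); the numbers are assumptions.
joint = {
    ("hot", "sun"): 0.4,
    ("hot", "rain"): 0.1,
    ("cold", "sun"): 0.2,
    ("cold", "rain"): 0.3,
}

def event_probability(joint, event):
    """Sum P(outcome) over the outcomes for which event(outcome) is true."""
    return sum(p for outcome, p in joint.items() if event(outcome))

# Event E: "it is hot OR it is sunny".
p_hot_or_sun = event_probability(joint, lambda o: o[0] == "hot" or o[1] == "sun")
print(p_hot_or_sun)
```

Here the event is expressed as a predicate on outcomes, which is equivalent to listing the set E explicitly.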

Marginal Distributions

sub-tables which eliminate variables

Marginalization (summing out)

combining collapsed rows by adding
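Summing out can be sketched as follows: to get the marginal over one variable, add up all joint entries that agree on that variable's value. The joint table and its numbers are illustrative assumptions, and `marginal` is a hypothetical helper name.

```python
from collections import defaultdict

# Illustrative joint distribution P(T, W); the numbers are assumptions.
joint = {
    ("hot", "sun"): 0.4,
    ("hot", "rain"): 0.1,
    ("cold", "sun"): 0.2,
    ("cold", "rain"): 0.3,
}

def marginal(joint, index):
    """Marginal over the variable at position `index`, summing out the rest."""
    totals = defaultdict(float)
    for outcome, p in joint.items():
        totals[outcome[index]] += p   # rows with the same value collapse into one
    return dict(totals)

p_t = marginal(joint, 0)  # P(T)
p_w = marginal(joint, 1)  # P(W)
print(p_t, p_w)
```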

Conditional Probabilities

simple relation between joint and conditional probabilities

P(a | b) = P(a, b) / P(b)
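A small sketch of this relation, assuming an illustrative joint table over T and W (the numbers are made up and `conditional` is a hypothetical helper name): divide the joint entry by the marginal of the conditioning value.

```python
# Illustrative joint distribution P(T, W); the numbers are assumptions.
joint = {
    ("hot", "sun"): 0.4,
    ("hot", "rain"): 0.1,
    ("cold", "sun"): 0.2,
    ("cold", "rain"): 0.3,
}

def conditional(joint, w, t):
    """P(W = w | T = t) = P(T = t, W = w) / P(T = t)."""
    p_joint = joint[(t, w)]
    p_t = sum(p for (t_val, _), p in joint.items() if t_val == t)
    return p_joint / p_t

p_sun_given_cold = conditional(joint, "sun", "cold")  # 0.2 / (0.2 + 0.3)
print(p_sun_given_cold)
```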

Conditional Distributions

probability distributions over some variables given fixed values of others

ex. P(W | T = hot)

a capital letter stands for the whole table over that variable's values (here, P(W = w | T = hot) for every w)

Normalization Trick

Step 1: select the joint probabilities matching the evidence

Step 2: normalize the selection (make it sum to 1) by dividing

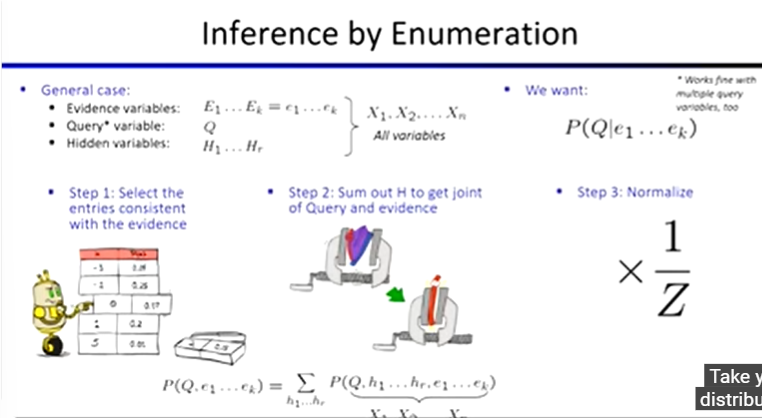
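The two steps of the normalization trick can be sketched as below. The joint table and its numbers are illustrative assumptions, and `normalize_given` is a hypothetical helper name.

```python
# Illustrative joint distribution P(T, W); the numbers are assumptions.
joint = {
    ("hot", "sun"): 0.4,
    ("hot", "rain"): 0.1,
    ("cold", "sun"): 0.2,
    ("cold", "rain"): 0.3,
}

def normalize_given(joint, t):
    """Return the conditional distribution P(W | T = t)."""
    # Step 1: select the joint entries that match the evidence T = t.
    selected = {w: p for (t_val, w), p in joint.items() if t_val == t}
    # Step 2: normalize the selection so it sums to 1.
    z = sum(selected.values())
    return {w: p / z for w, p in selected.items()}

p_w_given_cold = normalize_given(joint, "cold")
print(p_w_given_cold)
```

The normalizer `z` equals P(T = t), so this gives the same result as dividing each joint entry by the marginal of the evidence.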

Probabilistic Inference:

compute a desired probability from other known probabilities

generally compute conditional probabilities

probabilities change with new evidence

The Product Rule

P(y)P(x | y) = P(x, y)
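Read in the other direction, the product rule builds a joint table from a marginal and a conditional. A minimal sketch, with made-up numbers for P(T) and P(W | T):

```python
# Illustrative marginal P(T) and conditional P(W | T); the numbers are assumptions.
p_t = {"hot": 0.5, "cold": 0.5}
p_w_given_t = {
    "hot": {"sun": 0.8, "rain": 0.2},
    "cold": {"sun": 0.4, "rain": 0.6},
}

# Product rule: P(t, w) = P(t) * P(w | t) for every pair (t, w).
joint = {
    (t, w): p_t[t] * p_w_given_t[t][w]
    for t in p_t
    for w in p_w_given_t[t]
}
print(joint)
```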

The Chain Rule

P(x1, x2, x3) = P(x1)P(x2 | x1)P(x3 | x1, x2)
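The chain rule can be checked numerically by multiplying the three factors for every assignment and confirming that the result is a normalized joint distribution. This is a sketch under stated assumptions: three binary variables and made-up factor tables.

```python
from itertools import product

# Hypothetical factors over binary variables x1, x2, x3; all numbers are assumptions.
p_x1 = {0: 0.6, 1: 0.4}
p_x2_given_x1 = {                 # indexed as [x1][x2]
    0: {0: 0.7, 1: 0.3},
    1: {0: 0.2, 1: 0.8},
}
p_x3_given_x1_x2 = {              # indexed as [(x1, x2)][x3]
    (0, 0): {0: 0.9, 1: 0.1},
    (0, 1): {0: 0.5, 1: 0.5},
    (1, 0): {0: 0.4, 1: 0.6},
    (1, 1): {0: 0.1, 1: 0.9},
}

# Chain rule: P(x1, x2, x3) = P(x1) * P(x2 | x1) * P(x3 | x1, x2).
joint = {
    (a, b, c): p_x1[a] * p_x2_given_x1[a][b] * p_x3_given_x1_x2[(a, b)][c]
    for a, b, c in product([0, 1], repeat=3)
}

total = sum(joint.values())
print(joint[(1, 1, 1)], total)
```

Because each factor is itself normalized, the product sums to 1 over all eight assignments.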

Bayes Rule

P(x, y) = P(x | y)P(y) = P(y | x)P(x)

P(x | y) = [ P(y | x) / P(y) ] * P(x)
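A minimal numeric sketch of Bayes' rule, inverting P(W = sun | T = hot) into P(T = hot | W = sun). The three input probabilities are illustrative assumptions, and `bayes` is a hypothetical helper name.

```python
def bayes(p_y_given_x, p_x, p_y):
    """P(x | y) = P(y | x) * P(x) / P(y)."""
    return p_y_given_x * p_x / p_y

# Assumed inputs (illustrative only):
p_sun_given_hot = 0.8   # P(W = sun | T = hot)
p_hot = 0.5             # P(T = hot)
p_sun = 0.6             # P(W = sun)

p_hot_given_sun = bayes(p_sun_given_hot, p_hot, p_sun)
print(p_hot_given_sun)
```

The rule is useful when one conditional direction is easy to specify or measure and the other is the quantity of interest.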